The AI Takeover in Higher Education

How artificial intelligence is redefining college learning

—By Lexi Davis

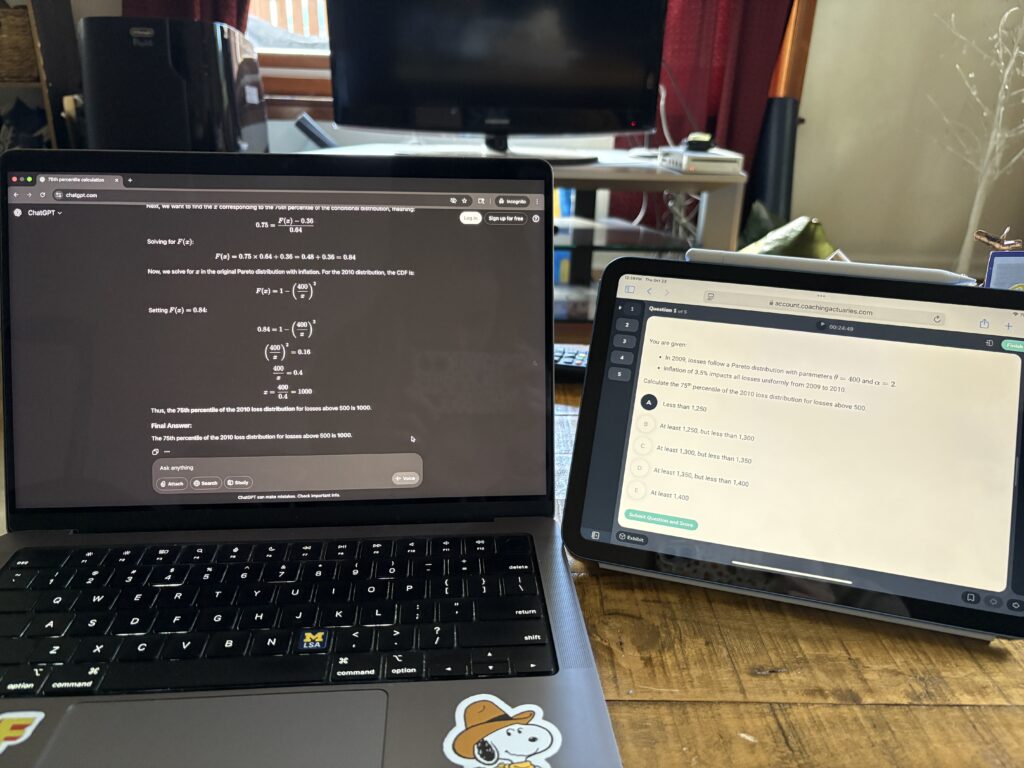

It’s 11 P.M. in a crowded library. After working tirelessly on an essay for weeks, you make the final edit of the final draft, only to look over and see your classmate copying and pasting the same essay prompt into ChatGPT. With access to increasingly powerful AI, how are college classrooms changing? And how is student learning being affected?

Generative AI tools like ChatGPT, Copilot, and Gemini can create content such as text, images, audio, or video in response to a prompt. These systems are trained on massive pools of information, allowing them to learn patterns from data and create new content for the user. Although their capabilities may seem limitless, “generative AI can still compose potentially incorrect, oversimplified, unsophisticated, or biased responses to questions or prompts,” according to the University Center for Teaching and Learning at the University of Pittsburgh.

A 2024 survey from the Digital Education Council found that 86% of students use AI in their studies, with nearly one in four students reporting that they use it daily. A survey of faculty at Northeastern University found that the most common concerns about AI included the potential for cheating, over-reliance on AI, and ethical concerns. As a result, the use of generative AI has become a highly discussed topic in higher education, with professors scrambling to create policies and teaching methods that restrict its use by students.

How does generative AI affect learning?

Hanna Bennett, U-M Undergraduate Program Director for Mathematics, says that the fundamental goal of a class is for students to learn and develop skills. “I’m concerned when the skills we’re trying to teach students are things that they can just put into a program and have it spit out an answer,” she says.

Bennett argues that programs like ChatGPT prevent students from thinking through problems. When students feel “stuck” on a problem, they may turn to a GenAI tool to get “unstuck,” which keeps them from working through that frustration and learning something new.

Monroe Moody, U-M Interim Director for the English Department Writing Program, says something similar. “My concern, mostly with Chat[GPT], is that if students are using Chat to do the writing, they are also using Chat to do the thinking.”

Lyric Williams, a senior at Michigan State University, recalls an instance involving AI in one of her science classes, a course about fact and fiction. At the beginning of the course, students were given a simple assignment: come up with a conspiracy theory. “Our professor gave us the parameters that it could be literally anything. We get to use our imagination,” she explains. She recalls that the girl sitting next to her said, “I can’t think of anything. I’m just going to ask ChatGPT.” Williams reflects on this experience, saying, “It is so disheartening to watch that happen because using our imaginations is one of the foundational things that make us human.”

Beyond obstructing learning, AI tools may not even provide students with accurate solutions to the prompts they enter, especially in math. According to a Medium article by Adnan Masood, large language models (LLMs) like ChatGPT and Gemini are “stochastic sequence models” that predict words based on previous words. Although an answer may look right, the constraints posed by this architecture hinder mathematical reasoning; the model has no way of checking whether the math is correct.

Using ChatGPT to solve math problems can lead to inaccurate solutions, an issue that is more concerning now than ever. According to the U.S. Education Department, American twelfth-graders’ average math score in 2025 was the worst since the current test began in 2005. As more students turn to AI to solve problems for them, there is a real risk that this trend will continue.

“So many students are using it as a stepping stone towards their degree and missing out on vital experiences that will help them in their job fields,” Williams says. She mentions that some professors are even encouraging the use of AI, citing one who recommended AI for creating schedules. But she says most professors are discouraging its use, creating new policies and standards for their students.

Moody has a policy to hold students responsible for their AI use. Students are allowed to use AI, but they need to document and disclose how they used it. “If they are going to use AI then they are responsible for anything that they turn in,” they say. “If AI is fabricating sources or giving false information, which it often does, the student is responsible for that.”

One effect of growing AI use, Bennett notes, is a shift in the sense of community among students. “One thing we have seen is a lot fewer students going to our tutoring center,” she says. She also notes a shift from students working together on homework to using ChatGPT. “It makes me sad because I think there’s a lot to be learned and gained from working with other people in all those ways.”

Introduction to ChatGPT

Moody teaches a course at the University of Michigan titled Introduction to ChatGPT. The course description states that the class, through readings and discussions, will consider how ChatGPT might shape notions of authenticity, authorship, citation, editing, and plagiarism. Students will also experiment with using ChatGPT at every stage of the writing process.

“It arose out of noticing in my classes that students were using ChatGPT,” Moody explains. “So, I decided, okay, I’m going to investigate this further.” Each week of the mini-course is framed around an ethical question that AI raises. Topics include the environmental impacts of AI, the working conditions of those involved with AI, and whether the use of AI in schools should be considered cheating.

“The purpose of the course is definitely not to give full-throated support of ChatGPT. I think it’s actually quite problematic,” Moody says.

Over the lifetime of this course, Moody has noticed a clear shift in their students’ attitudes toward AI. “When I first started this, most of the students were either super excited about AI, or they had never used it. And every semester since, I have found that students are less and less excited about AI.”

Moody also mentions the effects that teaching this course has had on the way they approach other courses at the university. “I do think that my assignments lean in more than they used to to that human element of writing in order to connect, or in order to make sense of the world.”

AI’s impact

“Generative AI is a huge problem in a lot of ways,” says Moody, “not just for classroom writing classes or academic writing classes, but actually for our society in all kinds of ways.”

The Associated Press (AP) summarizes AI’s effect on the environment. AI is largely powered by data centers, which rely on fresh water to stay cool. Larger centers can consume “up to 5 million gallons a day.” Jon Ippolito, professor of new media at the University of Maine, created an app showing the energy consumption estimates of certain AI-related activities. The app estimates that a simple AI prompt like “What is the capital of France?” uses 23 times more energy than the same question typed into Google (0.0003 kWh). A complex prompt like “Tell me the number of gummy bears that could fit in the Pacific Ocean” is estimated to use 210 times more energy than a Google search.

Speaking to PBS, Financial Times climate reporter Kenza Bryan says, “For the moment, there’s very little regulatory scrutiny of data center energy use.” She adds that specific regulations are unlikely to be imposed on data centers in the near future, meaning that AI will continue to hurt the environment as long as we continue to use it.

“There are people who profit off of our use of AI and ChatGPT. There are people who suffer because of our use of ChatGPT and AI,” says Moody. “There is an environmental consequence to using ChatGPT and AI, so I advise my students to keep that in mind as they’re thinking about if and how they want to use it.”

On the other hand, some generative AI advancements are having a positive impact on communities. For example, AI scribes are receiving a warm welcome in all kinds of health systems. According to The Permanente Medical Group (TPMG), generative AI scribes not only saved physicians an estimated 15,791 hours of documentation time but also improved patient-physician interactions and enhanced doctor satisfaction.

Jodyn Platt, U-M Associate Professor of Learning Health Sciences, says, “It’s predicting deterioration of sepsis in patients, it can predict whether people will show up to their appointment or not, it can predict who’s going to pay their medical bill or not. It’s kind of everywhere, more so every day.”

But with this advancement come additional ethical considerations. Platt discusses an AI tool in health care that helps clinicians write emails to patients and communicate with them. “To what extent do you disclose the use of that tool?” she asks.

The same consideration applies to students. To what extent should they disclose their own use of AI, and when should it be permitted?

How should AI be regarded going forward?

In the classroom, it can be tough to determine what steps should be taken against AI usage. Many debate whether AI literacy should be discussed with students or whether AI’s existence should be ignored entirely.

“I think it’s really important for students to understand what GenAI is, how it works, and what situations it’s appropriate to use it in,” Bennett says. She recalls giving students a sample question along with the answer GenAI gave, then asking them to evaluate the kinds of errors it can produce, demonstrating AI’s unreliability firsthand.

Platt says that in medical education, professors are grappling with two questions. How do you use this tool to help people get information faster and more accurately? And, how do you also train them to ask the right questions, to know when the system is wrong?

“Talk about it and be transparent about how you’re exploring it and learning from it,” Platt suggests. “My hope is that AI can take us to a place where we are asking better questions.”

“I think I’m going to continue to lean into the humanity of writing, the act of creative expression, which is a uniquely human endeavor,” Moody says. “I will continue to situate writing as a human activity for the purposes of reflection and thinking and connection, and those are things that Chat cannot replace.”

Feature photo: Computer with ChatGPT. Photo credit: Lexi Davis